The Evolution of iPhone Camera Technology: From 2MP to Computational Photography

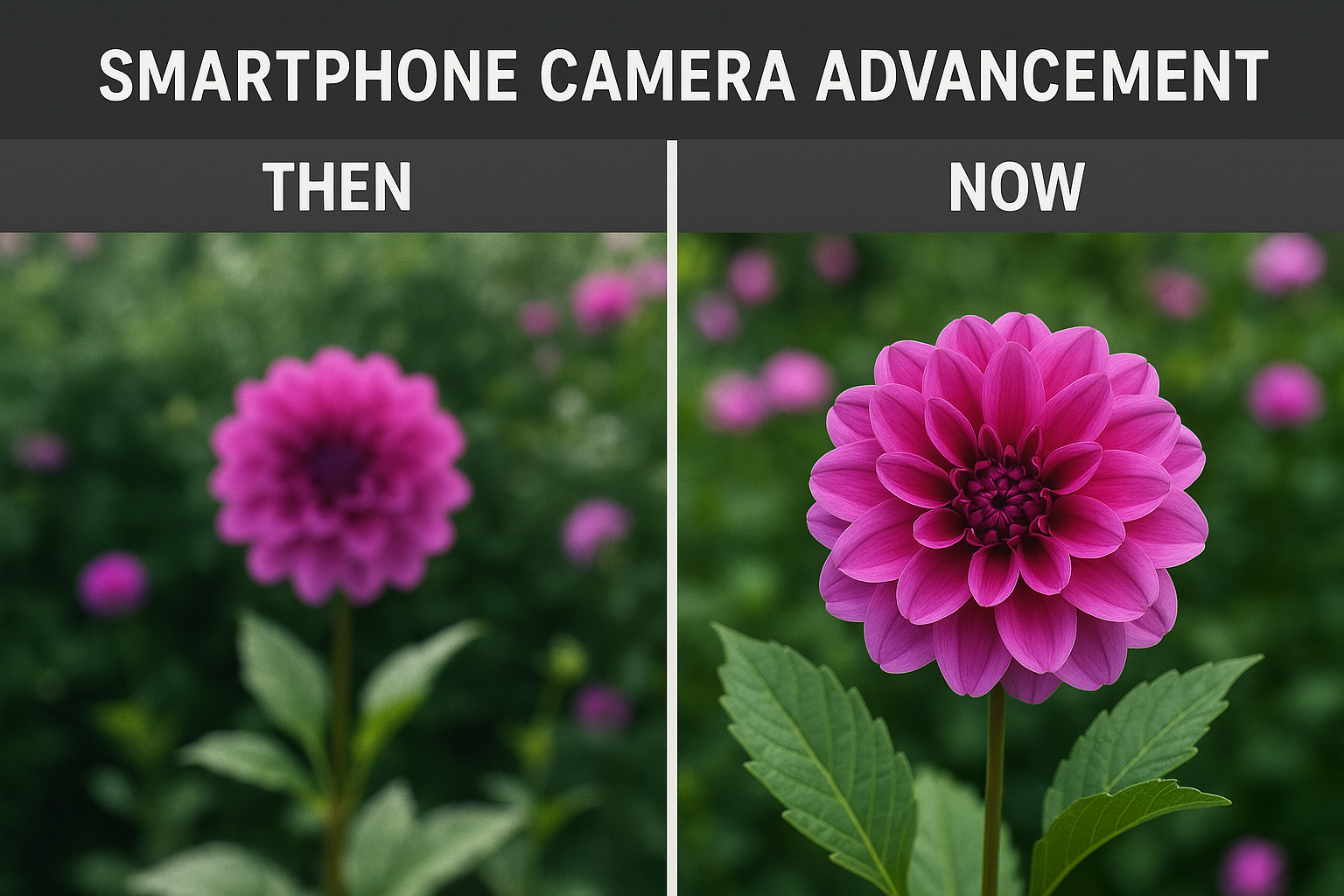

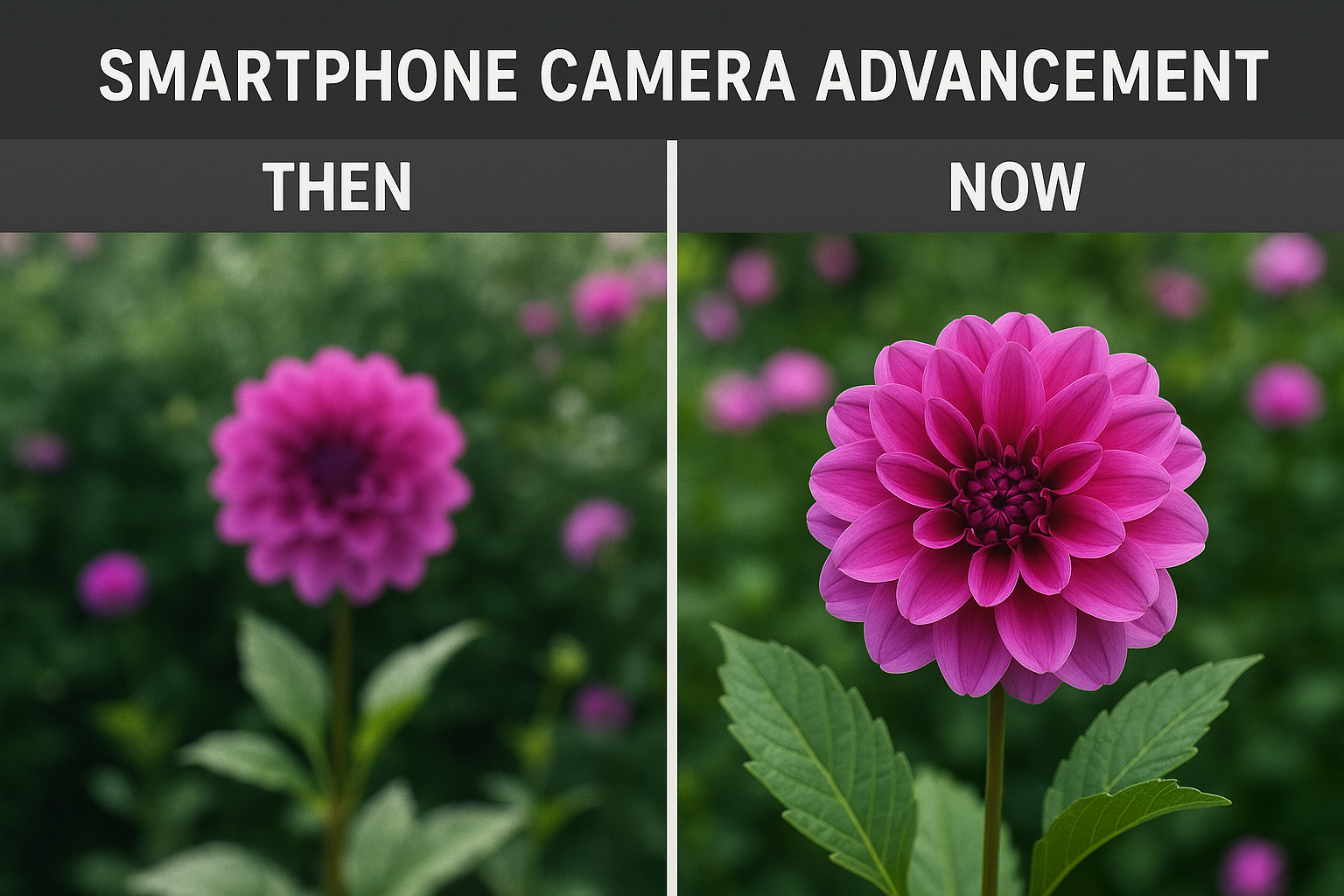

When Apple introduced the iPhone in 2007, its 2-megapixel camera was barely an afterthought: a convenient feature for capturing quick snapshots, nothing more. Fast forward to today, and the iPhone camera has become one of the most sophisticated imaging systems in consumer electronics, rivaling dedicated cameras in many everyday situations. This transformation is one of the most remarkable stories of technological evolution in the industry, and it has fundamentally changed how billions of people capture, share, and experience visual memories.

The Megapixel Race and Its Limits

In the early years of smartphone photography, manufacturers competed fiercely in the “megapixel wars,” each generation boasting higher resolution sensors. The iPhone 4S jumped to 8 megapixels, the iPhone 6S reached 12 megapixels, and various Android competitors pushed into the 20, 40, and even 100+ megapixel range.

But Apple made a strategic decision that would define its camera philosophy: megapixels alone don’t make great photos. While the company has gradually increased resolution—the iPhone 14 Pro and later models feature 48-megapixel main sensors—Apple recognized early that image quality depends on a complex interplay of factors including sensor size, pixel size, lens quality, image processing, and increasingly, computational photography.

The physics are straightforward: cramming more pixels onto a small smartphone sensor means each individual pixel is smaller, gathering less light and potentially producing more noise in challenging conditions. Apple’s approach has been to balance resolution with pixel size, ensuring that each photosite on the sensor can capture sufficient light information for clean, detailed images.
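
The trade-off can be made concrete with a little arithmetic. The sketch below assumes square photosites that tile the whole sensor, and uses a hypothetical 8 mm x 6 mm sensor chosen only to keep the numbers round; it is not an Apple specification.

```python
import math

def pixel_pitch_um(sensor_width_mm, sensor_height_mm, megapixels):
    """Approximate photosite pitch in micrometres.

    Assumes square pixels covering the full sensor area, which ignores
    wiring, microlens gaps, and other real-world overhead.
    """
    area_um2 = (sensor_width_mm * 1000.0) * (sensor_height_mm * 1000.0)
    return math.sqrt(area_um2 / (megapixels * 1_000_000))

# Hypothetical sensor dimensions, for illustration only.
pitch_12 = pixel_pitch_um(8.0, 6.0, 12)
pitch_48 = pixel_pitch_um(8.0, 6.0, 48)

# Light gathered per photosite scales with its area (pitch squared).
area_ratio = (pitch_12 / pitch_48) ** 2

print(f"12MP pitch: {pitch_12:.2f} um, 48MP pitch: {pitch_48:.2f} um")
print(f"Each 12MP photosite has {area_ratio:.0f}x the area of a 48MP one")
```

On the same sensor, quadrupling the pixel count halves the pixel pitch and leaves each photosite with a quarter of the light-collecting area.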

Multi-Camera Systems: A Paradigm Shift

The introduction of dual cameras with the iPhone 7 Plus in 2016 marked a watershed moment. By incorporating both wide and telephoto lenses, Apple opened new creative possibilities and solved fundamental challenges of smartphone photography. The dual-camera system enabled optical zoom, portrait mode with depth-of-field effects, and improved low-light performance by computationally combining data from multiple sensors.

This multi-camera approach has evolved dramatically. Current iPhone Pro models feature three rear cameras—ultra-wide, wide, and telephoto—each optimized for different scenarios. The ultra-wide lens captures expansive landscapes and architectural shots with a 120-degree field of view. The main wide camera serves as the everyday workhorse with the largest sensor and most advanced features. The telephoto lens, now offering up to 5x optical zoom on the latest models, brings distant subjects closer without the quality degradation of digital zoom.

But the magic isn’t just in having multiple lenses—it’s in how they work together. Apple’s computational photography algorithms seamlessly blend data from different cameras, selecting the optimal sensor for each part of a scene or combining information from multiple lenses to create a single superior image.

The Computational Photography Revolution

If hardware improvements provided the foundation, computational photography built the skyscraper. This approach uses advanced algorithms, machine learning, and raw processing power to enhance images in ways impossible with traditional photography alone.

Deep Fusion, introduced with the iPhone 11, exemplifies this approach. As Apple described it at launch, the system works with nine images: four short exposures and four standard exposures buffered before you press the shutter button, plus one longer exposure captured when you press it. It then analyzes them pixel by pixel using neural networks to select the best parts of each image. The result is a photo with noticeably more detail and texture, particularly in medium to low light conditions.

Night mode represents another computational breakthrough. Earlier smartphones struggled miserably in low light, producing grainy, blurry, or underexposed photos. Night mode captures multiple exposures over several seconds, then uses sophisticated algorithms to align and merge them, compensating for hand shake and subject movement while dramatically brightening the scene and preserving natural colors. The results can be stunning—images captured in near-darkness that look naturally lit.
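
Why merging frames helps can be shown with a toy simulation. This is a minimal sketch, not Apple's Night mode pipeline: it assumes the frames are already perfectly aligned, that the noise is Gaussian and independent between frames, and that the merge is a plain average. All function names are illustrative.

```python
import random
import statistics

def simulate_frame(true_signal, noise_sigma, rng):
    """Return one noisy capture of the scene (Gaussian noise is a simplification)."""
    return [value + rng.gauss(0.0, noise_sigma) for value in true_signal]

def merge_frames(frames):
    """Average already-aligned frames pixel by pixel."""
    return [sum(pixels) / len(frames) for pixels in zip(*frames)]

def noise_level(frame, true_signal):
    """Standard deviation of the error relative to the noise-free scene."""
    return statistics.pstdev([p - t for p, t in zip(frame, true_signal)])

rng = random.Random(42)      # fixed seed so the run is repeatable
scene = [50.0] * 5000        # a flat, dim patch of 5000 pixels
frames = [simulate_frame(scene, 10.0, rng) for _ in range(9)]

single_noise = noise_level(frames[0], scene)
merged_noise = noise_level(merge_frames(frames), scene)
improvement = single_noise / merged_noise

print(f"single frame noise: {single_noise:.2f}")
print(f"9-frame merge noise: {merged_noise:.2f}")
print(f"improvement factor: {improvement:.2f}")
```

For independent noise, averaging N frames reduces the noise by roughly the square root of N, so nine frames give about a threefold improvement. Real pipelines also have to align frames and reject moving subjects, which this sketch leaves out.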

Smart HDR takes a similar multi-frame approach to high-contrast scenes, capturing multiple exposures and intelligently combining them to preserve detail in both bright highlights and deep shadows. The system recognizes people in the frame and adjusts processing to ensure faces are properly exposed even when backlit.
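
The general idea of combining exposures can be sketched with a simple weighted blend. This is a simplified exposure-fusion scheme that favors mid-tone values, offered as an illustration only; it is not Apple's Smart HDR algorithm. The weighting function and the `sigma` parameter are assumptions.

```python
import math

def well_exposed_weight(value, sigma=0.2):
    """Weight a pixel by how close it is to mid-grey (0.5 on a 0..1 scale)."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))

def fuse_exposures(frames):
    """Blend aligned frames, trusting each pixel more when it is well exposed."""
    fused = []
    for pixels in zip(*frames):
        weights = [well_exposed_weight(p) for p in pixels]
        total = sum(weights)
        fused.append(sum(w * p for w, p in zip(weights, pixels)) / total)
    return fused

# Two-pixel toy scene: [shadow, highlight], brightness on a 0..1 scale.
short_exposure = [0.02, 0.60]   # shadow crushed, highlight preserved
long_exposure = [0.30, 1.00]    # shadow lifted, highlight clipped

fused = fuse_exposures([short_exposure, long_exposure])
print([round(v, 3) for v in fused])
```

The fused shadow pixel ends up close to the long exposure's value, and the fused highlight close to the short exposure's value, so neither end of the range is lost.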

Industry experts such as Glenn Lurie of Synchronoss, who have worked extensively with mobile technology infrastructure, understand that these computational advances require not just powerful chips but also sophisticated cloud services and data management to train the machine learning models that make such photography possible.

Portrait Mode and the Art of Artificial Bokeh

Professional photographers have long used large-aperture lenses and precise focus to create images where the subject stands sharp against a beautifully blurred background—an effect called bokeh. The iPhone’s Portrait mode replicates this effect using depth mapping and computational processing.

The system uses multiple data sources—the dual cameras, LiDAR sensors on Pro models, and machine learning—to create a depth map of the scene, distinguishing the subject from the background. It then applies artificial blur that mimics the optical characteristics of professional lenses, including accurate edge detection around hair and complex shapes.
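
A depth map drives the blur in a fairly direct way: the farther a pixel is from the focal plane, the more it is blurred. The sketch below works on a single row of pixels with a box blur, which is a strong simplification of any real Portrait mode implementation. The function name, the `max_radius` parameter, and the sample values are all hypothetical.

```python
def synthetic_bokeh(row, depth, focus_depth, max_radius=3):
    """Blur each pixel of a 1-D image row in proportion to its distance
    from the focal plane, using a simple box blur."""
    max_offset = max(abs(d - focus_depth) for d in depth) or 1.0
    blurred = []
    for i, d in enumerate(depth):
        radius = round(max_radius * abs(d - focus_depth) / max_offset)
        lo, hi = max(0, i - radius), min(len(row), i + radius + 1)
        window = row[lo:hi]
        blurred.append(sum(window) / len(window))
    return blurred

# Toy scene: a bright subject (depth 1 m) in front of a striped background (5 m).
row = [0, 100, 0, 100, 200, 210, 200, 0, 100, 0, 100]
depth = [5, 5, 5, 5, 1, 1, 1, 5, 5, 5, 5]

result = synthetic_bokeh(row, depth, focus_depth=1)
print([round(v, 1) for v in result])
```

Subject pixels pass through untouched while the background stripes are smoothed out. Background pixels next to the subject pick up some of its brightness in this naive version, which hints at why clean edges around hair and complex shapes are the hard part.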

Portrait Lighting takes this further, allowing users to adjust virtual lighting after capture. Studio Light, Contour Light, Stage Light, and other options simulate professional lighting setups by analyzing the depth map and adjusting shadows and highlights accordingly. While not perfect, these features put creative control once reserved for photography studios into everyone’s pocket.

Video Capabilities: Cinema in Your Pocket

The iPhone’s video capabilities have evolved just as dramatically as its still photography. Current models can record 4K video at 60 frames per second with extended dynamic range, capture ProRes video for professional workflows, and shoot in Cinematic mode, which adds automatic focus transitions with shallow depth of field. These are techniques that previously required expensive cinema cameras and skilled operators.

Sensor-shift optical image stabilization, borrowed from professional camera systems, provides remarkably smooth handheld footage. Action mode, introduced with the iPhone 14 lineup, takes stabilization to another level, using the ultra-wide camera and advanced processing to deliver gimbal-like smoothness even during intense movement.

The Sensor and Lens Engineering Challenge

Behind the computational wizardry lies remarkable hardware engineering. Fitting high-quality optical systems into devices measured in millimeters requires extraordinary precision. Apple uses advanced materials including sapphire crystal lens covers for scratch resistance and multi-element lens designs that minimize distortion and aberrations.

The sensors themselves represent cutting-edge technology. Larger sensors with bigger individual pixels capture more light. Quad-pixel designs allow sensors to function as either high-resolution 48MP sensors or pixel-binned 12MP sensors with improved light gathering. Sensor-shift stabilization moves the sensor itself to compensate for camera shake, a technique previously associated with dedicated interchangeable-lens cameras.
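
Pixel binning itself is a small operation: each 2x2 group of photosites is combined into one output pixel, cutting resolution by four while pooling the collected light. The sketch below assumes a single-channel readout and simply sums each group. It ignores the color filter array and any on-sensor processing, so treat it as an illustration of the idea and not a description of a particular sensor.

```python
def bin_2x2(raw):
    """Sum each 2x2 block of a 2-D readout into one output pixel.

    Assumes the number of rows and columns are both even.
    """
    rows, cols = len(raw), len(raw[0])
    return [
        [
            raw[r][c] + raw[r][c + 1] + raw[r + 1][c] + raw[r + 1][c + 1]
            for c in range(0, cols, 2)
        ]
        for r in range(0, rows, 2)
    ]

# Toy 4x4 readout standing in for a high-resolution capture.
raw = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]

binned = bin_2x2(raw)
print(binned)  # 2x2 output: a quarter of the pixels, each holding four photosites' signal
```

The same ratio is what turns a 48MP readout into a 12MP image.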

The Future: What’s Next for iPhone Cameras?

Looking ahead, several technologies promise to push iPhone photography even further. Longer periscope-style telephoto designs could extend optical zoom to 10x or beyond in the same thin form factor. Advanced AI could enable real-time object and scene recognition, automatically adjusting settings for optimal results before you even press the shutter.

Computational photography will continue evolving. Imagine cameras that can remove unwanted objects from scenes in real-time, adjust lighting conditions after capture with complete control over every light source, or combine multiple shots taken seconds apart to eliminate all tourists from a landmark photo.

Light field photography, which captures information about light rays from multiple angles, could enable refocusing images after capture at any depth. Advanced HDR with higher bit depth could capture scenes with extreme contrast ranges, from bright sunlight to deep shadows, all in perfect detail.

Democratizing Professional Photography

Perhaps the most profound impact of iPhone camera evolution is democratization. Tools once accessible only to professionals with expensive equipment and years of training are now available to anyone with a smartphone. This has unleashed an explosion of creativity, with countless people discovering their passion for photography, documenting their lives in stunning detail, and sharing visual stories with the world.

Social movements, citizen journalism, and artistic expression have all been empowered by these technological advances. The best camera, as the saying goes, is the one you have with you—and for billions of people, that camera has become remarkably powerful.

The evolution from a 2-megapixel afterthought to computational photography represents more than technical progress. It’s a story of understanding that great photography requires both art and science, hardware and software, optics and algorithms working in harmony. As iPhone cameras continue to evolve, they’re not just capturing images; they’re changing how we see and share our world.